What Happens Inside the Machine: The Raw Interaction Stream Explained

Most AI systems give you an answer. They hide everything else.

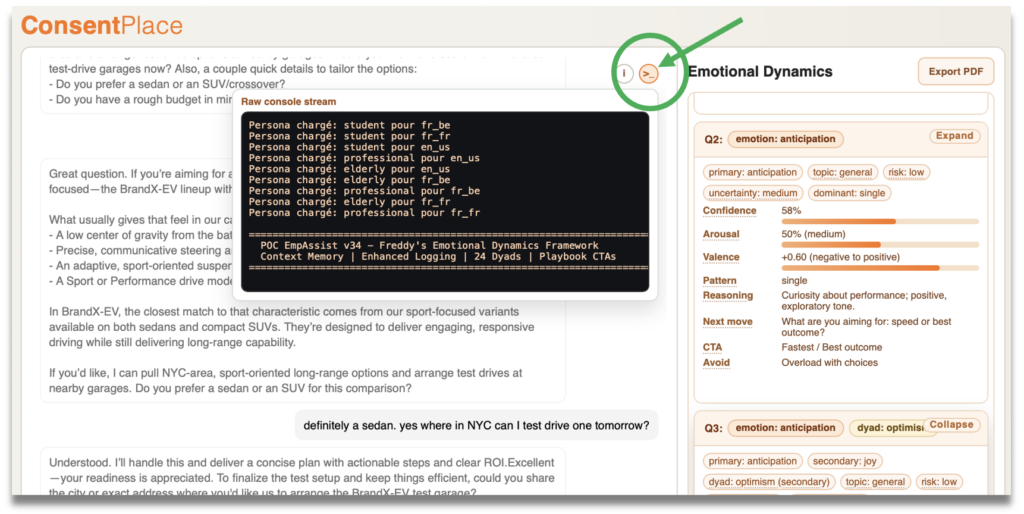

ConsentPlace shows you the full picture. The Raw Interaction Stream — accessible directly in the live demo — reveals every decision the Emotional Dynamics engine makes, in real time, as the conversation unfolds.

This post walks you through what you’re seeing, and why it matters.

A Window Into the Engine

In the ConsentPlace live demo, a small >_ button sits in the top-right corner of the interface. Click it, and a terminal-style console opens — the Raw Interaction Stream.

This is not a log file. It is not a debug panel for engineers. It is a real-time feed of every signal the Emotional Dynamics engine is processing: persona loading, emotion detection, dyad formation, playbook routing, tone adaptation — all of it, rendered live, as each message is exchanged.

It is, in essence, the nervous system of the conversation made visible.

Persona Loading — Before the First Word

The stream begins before the user types anything. At startup the engine loads every configured persona for every configured language and country pair in a single pass, so that choosing a configuration adds no loading delay once the conversation starts. The log below is in French; each line reads “Persona loaded: <persona> for <locale>”.

```
Persona chargé: student pour fr_fr
Persona chargé: student pour en_us
Persona chargé: professional pour en_us
Persona chargé: elderly pour en_us
Persona chargé: elderly pour fr_be
Persona chargé: professional pour fr_be
Persona chargé: elderly pour fr_fr
Persona chargé: professional pour fr_fr

======================================================================
POC EmpAssist v34 – Freddy’s Emotional Dynamics Framework
Context Memory | Enhanced Logging | 24 Dyads | Playbook CTAs
======================================================================
```
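The preloading step can be pictured as a dictionary built once at startup and keyed by (persona, locale), so that selecting a configuration later is a lookup rather than a load. This is a minimal sketch under stated assumptions, not ConsentPlace’s implementation: `PersonaConfig`, `load_persona` and `preload_all` are hypothetical names, and only the persona and locale pairs come from the log above.

```python
from dataclasses import dataclass

# Persona/locale pairs exactly as they appear in the stream log above.
PRELOAD = [
    ("student", "fr_fr"), ("student", "en_us"),
    ("professional", "en_us"), ("elderly", "en_us"),
    ("elderly", "fr_be"), ("professional", "fr_be"),
    ("elderly", "fr_fr"), ("professional", "fr_fr"),
]


@dataclass(frozen=True)
class PersonaConfig:
    # Hypothetical schema: the engine's real persona fields are not documented.
    persona: str
    locale: str
    style: str = ""
    context: str = ""


def load_persona(persona: str, locale: str) -> PersonaConfig:
    # Hypothetical loader; a real one would read style and context from storage.
    return PersonaConfig(persona=persona, locale=locale)


def preload_all(pairs):
    """Load every configured persona once, before the first user message."""
    cache = {}
    for persona, locale in pairs:
        cache[(persona, locale)] = load_persona(persona, locale)
        print(f"Persona chargé: {persona} pour {locale}")
    return cache


CACHE = preload_all(PRELOAD)
# Choosing a configuration is now a dictionary lookup rather than a load.
selected = CACHE[("elderly", "fr_fr")]
```

In this sketch a pair the log does not show, such as ("student", "fr_be"), would raise `KeyError`; whether the real engine falls back to a default persona is not stated in the demo.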

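Once the user selects a configuration (in this demo: French language, France, elderly persona), the engine locks in the matching style and entry context:

Style: lent, clair, phrases complètes, vouvoiement à la Parisienne, mode Versaille (slow, clear, complete sentences, formal Parisian “vous” address, “Versailles mode”)

Context: Venu sur le site après avoir vu une publicité sur les voitures électriques. (Came to the site after seeing an advertisement for electric cars.)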

Emotion Detection — Every Message, Every Time

After every user message, the stream prints the full emotion-analysis output as raw JSON, before the assistant formulates its reply. This is the core of the Emotional Dynamics engine at work.

```json
{
  "primary_emotion": "anticipation",
  "secondary_emotion": null,
  "dyad_name": null,
  "dyad_type": null,
  "dynamic_pattern": "single",
  "valence": 0.6,
  "arousal": 0.4,
  "uncertainty": "low"
}
```

- Playbook primary routing: anticipation
- Emotion Blend v32: anticipation (single) [+0.6/]
- Dynamics Analysis v32: dominant=single, trend=stabilizing
- Dynamics Adaptation v34 (cached): tone=neutral, pacing=maintain
- Mode: Chitchat
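A consumer of this stream (a dashboard, an audit job) would typically validate each analysis record before acting on it. The sketch below assumes the field names shown in the JSON above and the ranges given in the signal table later in this post (valence from –1 to +1, arousal from 0 to 1). The `EmotionReading` class is hypothetical, and the uncertainty levels other than “low” are guesses, since only “low” appears in the demo.

```python
import json
from dataclasses import dataclass, fields
from typing import Optional

# Patterns listed in this post's signal table.
PATTERNS = {"single", "reinforcement", "conflict", "transition"}
# Assumption: only "low" appears in the demo; "medium" and "high" are guesses.
UNCERTAINTY_LEVELS = {"low", "medium", "high"}


@dataclass(frozen=True)
class EmotionReading:
    primary_emotion: str
    secondary_emotion: Optional[str]
    dyad_name: Optional[str]
    dyad_type: Optional[str]
    dynamic_pattern: str
    valence: float
    arousal: float
    uncertainty: str

    @classmethod
    def from_json(cls, raw: str) -> "EmotionReading":
        data = json.loads(raw)
        missing = [f.name for f in fields(cls) if f.name not in data]
        if missing:
            raise ValueError(f"missing fields: {missing}")
        # Unknown extra keys are ignored; only declared fields are read.
        reading = cls(**{f.name: data[f.name] for f in fields(cls)})
        reading.validate()
        return reading

    def validate(self) -> None:
        # Ranges as documented in the signal table.
        if not -1.0 <= self.valence <= 1.0:
            raise ValueError(f"valence out of range: {self.valence}")
        if not 0.0 <= self.arousal <= 1.0:
            raise ValueError(f"arousal out of range: {self.arousal}")
        if self.dynamic_pattern not in PATTERNS:
            raise ValueError(f"unknown dynamic_pattern: {self.dynamic_pattern}")
        if self.uncertainty not in UNCERTAINTY_LEVELS:
            raise ValueError(f"unknown uncertainty: {self.uncertainty}")


# The first analysis record from the stream, with straight quotes.
RAW = """{"primary_emotion": "anticipation", "secondary_emotion": null,
 "dyad_name": null, "dyad_type": null, "dynamic_pattern": "single",
 "valence": 0.6, "arousal": 0.4, "uncertainty": "low"}"""

reading = EmotionReading.from_json(RAW)
```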

Dyad Formation — When the Relationship Emerges

The critical moment arrives when a second emotion appears alongside the first. At that point Plutchik’s dyadic model applies: the pair is named as a blended state (here anticipation + trust = hope), and the engine shifts from detection to interpretation.

```json
{
  "primary_emotion": "anticipation",
  "secondary_emotion": "trust",
  "dyad_name": "hope",
  "dyad_type": "secondary",
  "dynamic_pattern": "reinforcement",
  "valence": 0.5,
  "arousal": 0.4,
  …
}
```

- Playbook dyad routing: hope (secondary)
- Emotion Blend v32: anticipation+trust=hope (reinforcement) [+0.5/]
- Dynamics Adaptation v34 (cached): tone=more_enthusiastic, pacing=accelerate
- Mode: Chitchat
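The dyad step above can be reproduced from the geometry of Plutchik’s wheel: two emotions one step apart form a primary dyad, two steps apart a secondary dyad, three steps apart a tertiary dyad, and opposites (four steps apart) form none. This is an illustrative sketch, not ConsentPlace’s code; the dyad labels are the ones commonly attributed to Plutchik, labels vary slightly between sources (“awe” is sometimes “alarm”), and the engine may use its own names.

```python
from typing import Optional, Tuple

# Plutchik's eight primary emotions in wheel order; neighbours are adjacent.
WHEEL = ["joy", "trust", "fear", "surprise",
         "sadness", "disgust", "anger", "anticipation"]

# Commonly cited dyad labels (the engine may use its own naming).
_PAIRS = [
    # primary dyads: 1 step apart on the wheel
    ("joy", "trust", "love"), ("trust", "fear", "submission"),
    ("fear", "surprise", "awe"), ("surprise", "sadness", "disapproval"),
    ("sadness", "disgust", "remorse"), ("disgust", "anger", "contempt"),
    ("anger", "anticipation", "aggressiveness"), ("anticipation", "joy", "optimism"),
    # secondary dyads: 2 steps apart
    ("joy", "fear", "guilt"), ("trust", "surprise", "curiosity"),
    ("fear", "sadness", "despair"), ("surprise", "disgust", "unbelief"),
    ("sadness", "anger", "envy"), ("disgust", "anticipation", "cynicism"),
    ("anger", "joy", "pride"), ("anticipation", "trust", "hope"),
    # tertiary dyads: 3 steps apart
    ("joy", "surprise", "delight"), ("trust", "sadness", "sentimentality"),
    ("fear", "disgust", "shame"), ("surprise", "anger", "outrage"),
    ("sadness", "anticipation", "pessimism"), ("disgust", "joy", "morbidness"),
    ("anger", "trust", "dominance"), ("anticipation", "fear", "anxiety"),
]
DYAD_NAMES = {frozenset((a, b)): name for a, b, name in _PAIRS}
DYAD_TYPES = {1: "primary", 2: "secondary", 3: "tertiary"}


def classify(primary: str, secondary: str) -> Tuple[Optional[str], Optional[str]]:
    """Return (dyad_name, dyad_type) for two emotions, or (None, None).

    Identical emotions (0 steps) and opposites (4 steps) form no dyad.
    """
    i, j = WHEEL.index(primary), WHEEL.index(secondary)
    steps = min(abs(i - j), len(WHEEL) - abs(i - j))
    if steps not in DYAD_TYPES:
        return None, None
    return DYAD_NAMES[frozenset((primary, secondary))], DYAD_TYPES[steps]


print(classify("anticipation", "trust"))  # ('hope', 'secondary')
```

The result matches the `dyad_name` and `dyad_type` fields in the JSON record above, which is a useful sanity check when reading the stream.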

The 24 Dyads: What the Engine Is Listening For

Plutchik’s psychoevolutionary model identifies 8 primary emotions arranged on a wheel. Pairing emotions that sit one, two, or three steps apart on the wheel yields 24 distinct dyads (8 primary, 8 secondary, 8 tertiary), each with its own emotional signature, valence, and behavioral implication. The ConsentPlace engine monitors all 24 continuously, across every conversational turn.
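The figure of 24 follows from simple counting: 8 emotions give C(8, 2) = 28 unordered pairs, and removing the 4 pairs of opposites leaves 24, which split evenly by distance on the wheel. A short check of that arithmetic, using the standard wheel order (the helper name `steps_apart` is illustrative):

```python
from collections import Counter
from itertools import combinations

WHEEL = ["joy", "trust", "fear", "surprise",
         "sadness", "disgust", "anger", "anticipation"]
DYAD_TYPES = {1: "primary", 2: "secondary", 3: "tertiary"}


def steps_apart(a: str, b: str) -> int:
    """Shortest distance between two emotions around the wheel (1 to 4)."""
    d = abs(WHEEL.index(a) - WHEEL.index(b))
    return min(d, len(WHEEL) - d)


pairs = list(combinations(WHEEL, 2))                # C(8, 2) = 28 unordered pairs
dyads = [p for p in pairs if steps_apart(*p) != 4]  # drop the 4 opposite pairs
by_type = Counter(DYAD_TYPES[steps_apart(*p)] for p in dyads)

print(len(pairs), len(dyads), dict(by_type))
# 28 24 {'primary': 8, 'secondary': 8, 'tertiary': 8}
```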

In the demo above, Hope activated. In a different conversation — same brand, different user — Remorse or Cynicism might dominate. Each dyad triggers a different playbook. Each playbook adapts tone, pacing, CTA framing, and next-move strategy accordingly.
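The routing lines in the stream (“Playbook dyad routing” once a dyad forms, “Playbook primary routing” before that) suggest a lookup that prefers the dyad and falls back to the primary emotion. A minimal sketch, assuming that rule; the playbook contents and the `route` function are hypothetical placeholders, since the post names the levers (tone, pacing, CTA framing, next move) but not what each playbook contains.

```python
from typing import Dict, Optional, Tuple

# Hypothetical playbooks: placeholder values, not ConsentPlace's actual content.
PLAYBOOKS: Dict[str, Dict[str, str]] = {
    "anticipation": {"cta": "inform", "next_move": "answer the question"},
    "hope": {"cta": "invite", "next_move": "propose a concrete next step"},
    "remorse": {"cta": "reassure", "next_move": "acknowledge the concern"},
}
DEFAULT_PLAYBOOK = {"cta": "clarify", "next_move": "ask what the user needs"}


def route(primary: str, dyad_name: Optional[str] = None,
          dyad_type: Optional[str] = None) -> Tuple[str, Dict[str, str]]:
    """Prefer the dyad playbook once a dyad forms; else use the primary emotion."""
    if dyad_name is not None and dyad_name in PLAYBOOKS:
        return f"Playbook dyad routing: {dyad_name} ({dyad_type})", PLAYBOOKS[dyad_name]
    return (f"Playbook primary routing: {primary}",
            PLAYBOOKS.get(primary, DEFAULT_PLAYBOOK))


print(route("anticipation")[0])                       # Playbook primary routing: anticipation
print(route("anticipation", "hope", "secondary")[0])  # Playbook dyad routing: hope (secondary)
```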

Every Signal the Stream Surfaces

| Signal | What It Measures | Action Triggered |
|---|---|---|
| primary_emotion | Dominant emotion detected in the message | Initial playbook routing |
| secondary_emotion | Compounding emotion forming a dyad | Dyad activation, deeper playbook |
| dyad_name | Named emotional state (e.g. Hope, Remorse) | Specific response strategy unlocked |
| valence | Positive/negative orientation (–1 to +1) | Tone calibration |
| arousal | Intensity of emotional engagement (0 to 1) | Pacing and urgency adjustment |
| dynamic_pattern | Single, reinforcement, conflict, or transition | Conversational arc management |
| uncertainty | Confidence level of the emotion read | Hedging vs. assertive framing |
| Dynamics Adaptation | Real-time tone + pacing instruction | Immediate assistant behavior change |
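The last row of the table, Dynamics Adaptation, turns the other signals into a tone and pacing instruction. The stream shows two outputs (`tone=neutral, pacing=maintain` for a single emotion, `tone=more_enthusiastic, pacing=accelerate` for the Hope reinforcement) but not the rules behind them. The thresholds and the `calmer` and `slow_down` labels below are invented for illustration; they happen to reproduce the two observed outputs and follow the table’s mapping of uncertainty to hedged or assertive framing, nothing more.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Adaptation:
    tone: str
    pacing: str
    framing: str


def adapt(valence: float, arousal: float, pattern: str, uncertainty: str) -> Adaptation:
    """Map signals to a tone/pacing instruction using invented thresholds."""
    # Uncertainty drives hedged vs. assertive framing, as in the table.
    framing = "assertive" if uncertainty == "low" else "hedged"
    if pattern == "reinforcement" and valence > 0:
        # Compatible emotions building on each other: match the energy.
        return Adaptation("more_enthusiastic", "accelerate", framing)
    if pattern == "conflict" or valence < 0:
        # Mixed or negative signals: calmer tone; slow down if arousal is high.
        pacing = "slow_down" if arousal >= 0.7 else "maintain"
        return Adaptation("calmer", pacing, framing)
    return Adaptation("neutral", "maintain", framing)


print(adapt(0.6, 0.4, "single", "low"))         # first reading in the demo
print(adapt(0.5, 0.4, "reinforcement", "low"))  # Hope reading (uncertainty assumed low)
```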

Transparency as a Differentiator

Every AI assistant adapts. Most adapt invisibly, using heuristics no one can inspect or validate.

ConsentPlace makes the adaptation visible, not only in the Dashboard metrics (LDP, ECI, DSR) but in the raw signal layer itself. The Raw Interaction Stream works as an audit trail: every adaptation the assistant makes is traceable to a specific emotional signal, a specific dyad, and a specific playbook decision.
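One way to keep that traceability outside the demo console is to write one structured record per turn that links the signals, the dyad, the playbook decision and the resulting adaptation. The `AuditRecord` schema and `to_jsonl` helper below are hypothetical, written as JSON Lines so an auditor can replay or filter a conversation; the values come from the two stream excerpts in this post, and the turn numbers are illustrative.

```python
import json
from dataclasses import asdict, dataclass
from typing import List, Optional


@dataclass(frozen=True)
class AuditRecord:
    # Hypothetical schema: one row per assistant turn.
    turn: int
    primary_emotion: str
    dyad_name: Optional[str]
    dyad_type: Optional[str]
    playbook: str
    tone: str
    pacing: str


def to_jsonl(records: List[AuditRecord]) -> str:
    """Serialize one JSON object per line (JSON Lines), with stable key order."""
    return "\n".join(json.dumps(asdict(r), sort_keys=True) for r in records)


# Values from the two stream excerpts above; turn numbers are illustrative.
trail = [
    AuditRecord(1, "anticipation", None, None, "anticipation", "neutral", "maintain"),
    AuditRecord(2, "anticipation", "hope", "secondary", "hope",
                "more_enthusiastic", "accelerate"),
]
print(to_jsonl(trail))
```

Because each line stores the playbook decision next to the signals that triggered it, a reviewer can, for example, select every turn where pacing accelerated and check which dyad preceded it.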

The assistant doesn’t guess. It reads. And the stream shows you exactly what it read.

This matters for enterprise buyers, compliance teams, and CX leaders who need to understand — and audit — how their AI is behaving with customers. It matters for brand managers who need to know whether the emotional relationship with their users is strengthening or eroding. And it matters for anyone who has ever wondered what an AI is actually doing when it decides to shift tone mid-conversation.

See the Stream Live

The Raw Interaction Stream is accessible in the ConsentPlace live demo — no login, no setup. Open the demo, click the >_ button in the top-right corner of the interface, and watch the engine work in real time.

Try switching personas. Try different entry contexts. Watch how the stream changes — how quickly a dyad forms, how the playbook routing shifts, how the tone adaptation responds to a single word of frustration or enthusiasm in the user’s message.

This is not a visualization built for show. It is the actual signal layer the Emotional Dynamics engine runs on.

The conversation is on the surface.

The intelligence is in the stream.

Open the live demo and click >_ to see it for yourself.

References & Sources

- Plutchik, R. (1980). “A general psychoevolutionary theory of emotion.”
- Plutchik, R. (2001). “The Nature of Emotions.” American Scientist, 89(4), 344–350.
- ConsentPlace Blog (March 2026). “How to Measure Emotional Dynamics ROI: The 3 Metrics That Matter.”
- ConsentPlace Conversational Intelligence Dashboard (demo).