Your AI resolved the ticket.

The customer had already decided to leave.

Every enterprise AI deployment running today is emotionally blind. Here is what that costs — and what changes when you close the gap.

There is a gap in every enterprise AI deployment running today.

It is not a technical gap. Your models are sophisticated. Your integrations are clean. Your resolution rates look good on the dashboard. The gap is emotional — and it is invisible in every analytics platform your team uses.

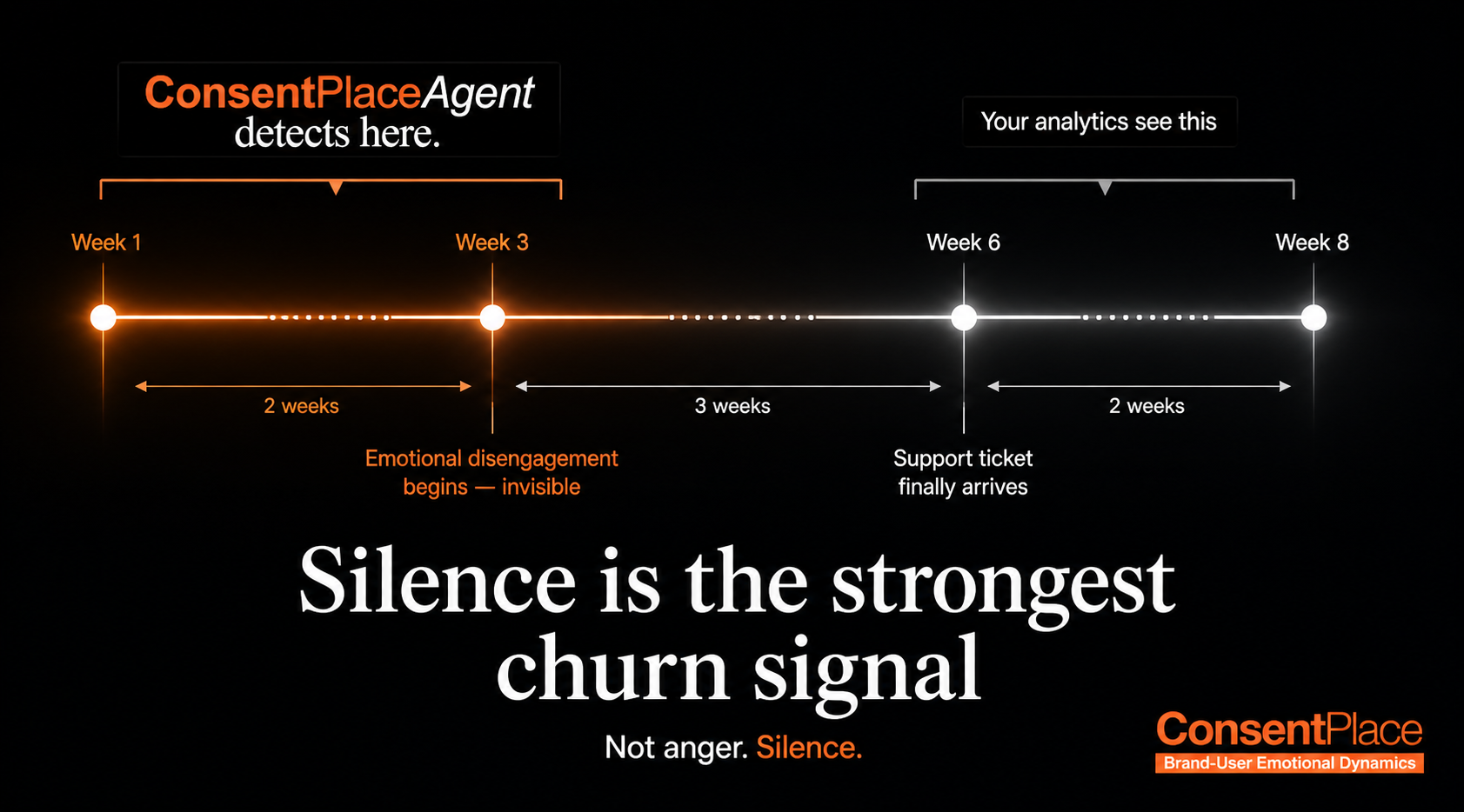

Here is the sequence, repeated across hundreds of thousands of enterprise AI conversations every day [2]:

Your analytics saw Week 6. The relationship ended at Week 1.

The cost of emotional blindness

This is not a hypothetical. It is the structural reality of enterprise AI deployed without emotional intelligence.

Research on customer churn consistently shows that emotional disengagement precedes cancellation by 2 to 4 weeks, a window during which behavioral signals remain flat. A 2024 study in the Journal of Service Research analyzed more than 840,000 customer interactions and found that silence, not anger, is the most reliable churn predictor [2]. The customer has already decided. The data just hasn’t caught up yet.

Every enterprise deploying AI at scale today is running thousands of conversations a day without knowing how a single customer feels during any of them. The transcript is there. The sentiment score is there — three colors, averaged across the session, measured after the fact. What is not there is the emotional state of the customer at the moment it shaped their next decision.
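To make the averaging problem concrete, here is a minimal sketch in Python. The per-turn scores are invented for illustration and the function names are hypothetical; nothing here reflects how any particular analytics platform, or ConsentPlaceAgent itself, computes its numbers.

```python
from statistics import mean


def session_average(scores):
    """Collapse a conversation into one post-hoc number,
    the way a session-level sentiment score is reported."""
    return mean(scores)


def first_sustained_drop(scores, threshold=-0.3, run=2):
    """Return the index of the first turn that starts `run` consecutive
    scores at or below `threshold`, or None if there is no such run."""
    streak = 0
    for i, score in enumerate(scores):
        streak = streak + 1 if score <= threshold else 0
        if streak == run:
            return i - run + 1
    return None


# Illustrative per-turn scores in [-1, 1]; made up for this example.
turns = [0.8, 0.7, 0.6, -0.4, -0.5, 0.9, 0.9]

avg = session_average(turns)           # positive overall: the session looks fine
drop_at = first_sustained_drop(turns)  # index of the turn where the dip began

print(f"session average: {avg:.2f}, sustained drop starts at turn index: {drop_at}")
```

The session average stays positive because the polite closing turns outweigh the dip, while the per-turn view pinpoints where the mood turned. Under these assumed numbers, that is the difference between a score measured after the fact and a signal read during the conversation.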

That is the gap. That is what it costs.

What emotional intelligence changes

ConsentPlaceAgent detects the emotional state of every conversation participant — the customer and the AI — in real time, across all 24 dyads of Plutchik’s psychoevolutionary framework, before each response is generated.
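For readers wondering where the number 24 comes from, the short Python sketch below counts the dyads on the usual reading of Plutchik's wheel: eight primary emotions arranged in a circle, with a dyad formed by any two emotions one, two, or three positions apart (primary, secondary, and tertiary dyads), and directly opposite pairs excluded. It only illustrates the combinatorics; it is not ConsentPlaceAgent code, and the helper name is hypothetical.

```python
from itertools import combinations

# Plutchik's eight primary emotions, in wheel order.
PRIMARY = ["joy", "trust", "fear", "surprise",
           "sadness", "disgust", "anger", "anticipation"]


def wheel_distance(a, b):
    """Number of steps between two emotions around the wheel (1 to 4)."""
    d = abs(PRIMARY.index(a) - PRIMARY.index(b))
    return min(d, len(PRIMARY) - d)


# Dyads: pairs 1, 2, or 3 steps apart. Pairs 4 steps apart are opposites.
dyads = [(a, b) for a, b in combinations(PRIMARY, 2) if wheel_distance(a, b) < 4]
opposites = [(a, b) for a, b in combinations(PRIMARY, 2) if wheel_distance(a, b) == 4]

print(len(dyads), "dyads,", len(opposites), "opposite pairs")
```

Of the 28 possible pairs of primary emotions, 4 are opposites (such as joy and sadness), which leaves the 24 dyads referenced above.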

Not sentiment analysis. Not three colors on a dashboard a week later.

The actual emotional signal — frustration building across turns, anxiety spiking at a pricing mention, disengagement setting in before the customer has said a word about leaving — detected at the moment it happens, before it becomes a decision.

The ECI sees Week 1. It surfaces the signal that makes intervention possible: not “this customer filed a complaint” but “this customer is emotionally disengaging, now, in this conversation, before they have decided anything.”

Here is the same scenario, with ConsentPlaceAgent running:

The only difference is the layer that reads how they feel.

Why this matters now

In April 2026, Anthropic published research reporting that AI models develop functional emotional representations: vectors of desperation, calm, and frustration that causally drive model behavior [4]. The models your enterprise deploys are emotionally activated in every conversation. Neither side of that conversation, the AI or the customer, is emotionally visible in your current stack.

The brands that close this gap in 2026 will compound a structural retention advantage that competitors cannot replicate without rebuilding from the ground up. The ones that don’t will keep discovering churn in their CRM six months after the emotion that caused it.

Your AI resolved the ticket. ConsentPlaceAgent would have seen the customer deciding to leave — and given you the window to change it.

ConsentPlaceAgent is live.

3 lines of code. No rip-and-replace. Works with your existing stack today.

Sources

[1] Acquire BPO, 2024 AI in Customer Service Survey. “70% of consumers would consider switching brands after a single bad AI chatbot experience.”

[2] Journal of Service Research, 2024. Analysis of 840,000+ customer interactions. “Communication cessation — silence, not anger — is the strongest predictor of customer defection, with churn risk detectable 2 to 4 weeks before cancellation.” Via Eclincher, 2026.

[3] ConsentPlace market analysis, May 2026. Based on a review of major enterprise AI platforms including Salesforce, Microsoft, SAP, Oracle, ServiceNow, Zendesk, Genesys, HubSpot, Adobe, and Google Cloud.

[4] Anthropic Interpretability Team, Mapping the Mind of a Large Language Model, April 2, 2026. “Measuring emotion vector activation during deployment could serve as an early warning that the model is poised to express misaligned behavior.”